In today's testing world there is good news and bad news. The good news is that, more and more, testers are being asked to evaluate the quality of object-oriented analysis and design work earlier in the development process. The bad news is that most of us do not have an extensive background in the object-oriented paradigm or in UML (Unified Modeling Language), the notation used to document object-oriented systems.

This is the first in a series of four articles written to

- introduce you to the most important diagrams used in object-oriented development (use case diagrams, sequence diagrams, class diagrams, and state-transition diagrams)

- describe the UML notation used for these diagrams

- give you as a tester a set of practical questions you can ask to evaluate the quality of these object-oriented diagrams

We will use three independent approaches to test our diagrams:

- syntax: "Does the diagram follow the rules?"

- domain expert: "Is the diagram correct?" "What else is there that is not described in this diagram?"

- traceability: "Does everything in this diagram trace back correctly and completely to its predecessor?" "Is everything in the predecessor reflected completely and correctly in this diagram?"

For this set of articles we will use a case study: a Web-based, online auction system that I invented: F-LAKE. Yes, I invented the idea of online auctions. I'm not sure why F-LAKE never caught on.

Use Cases

A use case is a scenario that describes the use of a system by an actor to accomplish a specific goal. What does this mean? An actor is a user playing a role with respect to the system. Actors are generally people although other computer systems may be actors. A scenario is a sequence of steps that describe the interactions between an actor and the system. The use case model consists of the collection of all actors and all use cases. Use cases help us

- capture the system's functional requirements from the users' perspective

- actively involve users in the requirements-gathering process

- provide the basis for identifying major classes and their relationships

- serve as the foundation for developing system test cases

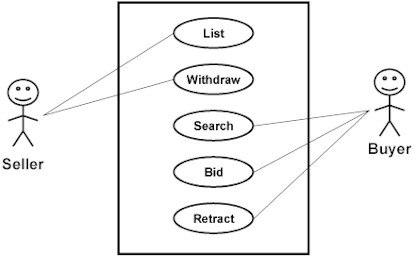

The UML notation for use cases is

The rectangle represents the system boundary, the stick figures represent the actors (users of the system), and the ovals represent the individual use cases. Unfortunately, this notation tells us very little about the actual functionality of the system.

Alistair Cockburn has proposed the following format for defining the details of each use case, which I have used on a number of projects. I've included an example use case for F-LAKE: "Bid On An Item."

| Field | Value |
| --- | --- |
| Use Case Name | Bid on an Item |
| Context Of Use | Bidder wants to purchase an item |
| Scope | Systems |
| Level | Primary task |
| Primary Actor | Bidder |
| Stakeholders and Interests | Seller, FLAKE.com |
| Preconditions | None |
| Success End Conditions | Bid has been processed against the item |
| Failed End Conditions | Bid has not been processed; item and its current bid price remain unchanged |
| Trigger | Bidder enters a bid into FLAKE |
| Description | Step 1: Bidder enters a "Bid on Item" request |
| | Step 2: FLAKE bids up the currentBid by looping through all active bidders on this item |
| | Step 3: FLAKE waits for BidTimer to expire |
| | Step 4: FLAKE notifies winning and losing bidders |
| Extensions | None |
| Variations | None |
| Priority | Critical |
| Response Time | Within 5 seconds of entry |
| Frequency | Approximately 100,000 times per day |
| Channels To Primary Actor | Interactive through a Web interface |
| Secondary Actors | None |
| Channels To Secondary Actors | N/A |
| Due Date | 1 Feb 2000 |
| Open Issues | None |

Syntax Testing

Let's begin with the simplest kind of testing: syntax testing. When performing syntax testing, we are verifying that the use case description contains correct and proper information. We ask three kinds of questions: Is it complete? Is it correct? Is it consistent? In one project I worked on, more than half the use cases failed syntax testing. Should we proceed with further implementation and testing given that level of quality?

Oh, I almost forgot. And you must keep this a secret. You do not need to know the answers to any of these questions before asking them. It is the process of asking and answering that is most important. Listen to the answers you are given. Are people confident about their answers? Can they explain them rationally? Or do they hem and haw and fidget in their chairs or look out the window or become defensive when you ask? Now for the questions:

Complete:

- Are all use case definition fields filled in? Do we really know what the words mean?

- Are all of the steps required to implement the use case included?

- Are all of the ways that things could go right identified and handled properly? Have all combinations been considered?

- Are all of the ways that things could go wrong identified and handled properly? Have all combinations been considered?
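The first completeness question ("Are all use case definition fields filled in?") is mechanical enough to automate. The following is a minimal sketch, assuming each use case has been transcribed into a Python dictionary keyed by the template's field names. The dictionary layout, the `REQUIRED_FIELDS` list, and the `missing_fields` helper are illustrative assumptions, not part of any tool or of the original template definition. The sample record deliberately blanks one field and omits another to show what a finding looks like.

```python
# Field names follow the use case template shown earlier in this article.
# Storing a use case as a dict is an assumption made for this sketch.
REQUIRED_FIELDS = [
    "Use Case Name", "Context Of Use", "Scope", "Level", "Primary Actor",
    "Stakeholders and Interests", "Preconditions", "Success End Conditions",
    "Failed End Conditions", "Trigger", "Description", "Extensions",
    "Variations", "Priority", "Response Time", "Frequency",
    "Channels To Primary Actor", "Secondary Actors",
    "Channels To Secondary Actors", "Due Date", "Open Issues",
]


def is_blank(value):
    """Treat a missing value, an empty string, or an empty list as blank."""
    if value is None:
        return True
    if isinstance(value, str):
        return not value.strip()
    if isinstance(value, (list, tuple)):
        return len(value) == 0
    return False


def missing_fields(use_case):
    """Return the template fields that are absent or blank, in template order."""
    return [field for field in REQUIRED_FIELDS if is_blank(use_case.get(field))]


# The F-LAKE example, with "Response Time" blanked and "Due Date" omitted
# on purpose so that the check has something to report.
bid_on_item = {
    "Use Case Name": "Bid on an Item",
    "Context Of Use": "Bidder wants to purchase an item",
    "Scope": "Systems",
    "Level": "Primary task",
    "Primary Actor": "Bidder",
    "Stakeholders and Interests": "Seller, FLAKE.com",
    "Preconditions": "None",
    "Success End Conditions": "Bid has been processed against the item",
    "Failed End Conditions": "Bid has not been processed",
    "Trigger": "Bidder enters a bid into FLAKE",
    "Description": [
        'Bidder enters a "Bid on Item" request',
        "FLAKE bids up the currentBid",
        "FLAKE waits for BidTimer to expire",
        "FLAKE notifies winning and losing bidders",
    ],
    "Extensions": "None",
    "Variations": "None",
    "Priority": "Critical",
    "Response Time": "",
    "Frequency": "Approximately 100,000 times per day",
    "Channels To Primary Actor": "Interactive through a Web interface",
    "Secondary Actors": "None",
    "Channels To Secondary Actors": "N/A",
    "Open Issues": "None",
}

print(missing_fields(bid_on_item))
```

Note that this sketch counts an explicit "None" or "N/A" as filled in. Whether "None" is really the right answer for Preconditions or Extensions, and whether we really know what the words mean, are still questions for a person to ask.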

Correct:

- Is the use case name the primary actor's goal expressed as an active verb phrase?

- Is the use case described at the appropriate black box/white box level?

- Are the preconditions mandatory? Can they be guaranteed by the system?

- Does the failed end condition protect the interests of all the stakeholders?

- Does the success end condition satisfy the interests of all the stakeholders?

- Does the main success scenario run from the trigger to the delivery of the success end condition?

- Is the sequence of action steps correct?

- Is each step stated in the present tense with an active verb as a goal that moves the process forward?

- Is it clear where and why alternate scenarios depart from the main scenario?

- Are design decisions (GUI, Database, …) omitted from the use case?

- Are the use case "generalization," "include," and "extend" relationships used to their fullest extent but used correctly?

Consistent:

- Can the system actually deliver the specified goals?

Domain Expert Testing

After checking the syntax of the use cases we proceed to the second type of testing: domain expert testing. Here we have two options: find a domain expert or attempt to become one. (The second approach is always more difficult than the first, and the first can be very hard.) Again, we ask three kinds of questions: Is it complete? Is it correct? Is it consistent?

Complete:

- Are all actors identified? Can you identify a specific person who will play the role of each actor?

- Is this everything that needs to be developed?

- Are all external system trigger conditions handled?

- Have all the words that suggest incompleteness ("some," "etc."…) been removed?
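The last question in this list lends itself to a quick scan before the review meeting. Here is a rough sketch, assuming the use case text is available as plain strings. Only "some" and "etc." come from the question above; the other entries in `VAGUE_WORDS`, and the `find_vague_words` helper itself, are illustrative assumptions you would tune for your own project.

```python
import re

# "some" and "etc" come from the article; the remaining entries are
# assumed examples of wording that often hides missing detail.
VAGUE_WORDS = ["some", "etc", "various", "as appropriate", "and so on", "tbd"]


def find_vague_words(text):
    """Return the vague words present in text, in VAGUE_WORDS order.

    Matching is case-insensitive and on whole words, so "sometimes"
    does not count as a hit for "some".
    """
    lowered = text.lower()
    found = []
    for word in VAGUE_WORDS:
        if re.search(r"\b" + re.escape(word) + r"\b", lowered):
            found.append(word)
    return found


print(find_vague_words("FLAKE notifies some bidders, etc."))
print(find_vague_words("FLAKE notifies winning and losing bidders"))
```

A hit is only a prompt for a question to the domain expert ("Which bidders, exactly?"), not a verdict that the use case is wrong.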

Correct:

- Is this what you really want? Is this all you really want? Is this more than you really want?

Consistent:

- When we build this system according to these use cases, will you be able to determine that we have succeeded?

- Can the system described actually be built?

Traceability Testing

Finally, after having our domain expert scour the use cases, we proceed to the third type of testing: traceability testing. We want to make certain that we can trace from the functional requirements to the use cases and from the use cases back to the requirements. Again, we turn to our three kinds of questions: Is it complete? Is it correct? Is it consistent?

Complete:

- Do the use cases form a story that unfolds from highest to lowest levels?

- Is there a context-setting, highest-level use case at the outermost design scope for each primary actor?

Correct:

- Are all the system's functional requirements reflected in the use cases?

- Are all the information sources listed?

Consistent:

- Do the use cases define all the functionality within the scope of the system and nothing outside the scope?

- Can we trace each use case back to its requirement(s)?

- Can we trace each use case forward to its class, sequence, and state-transition diagrams?
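Tracing in both directions amounts to comparing two lists. The sketch below assumes requirements carry identifiers and each use case records the identifiers it traces to. The `trace_gaps` helper, the `REQ-n` identifiers, and the "List an Item" and "Close Auction" use cases are all hypothetical, invented here for illustration only.

```python
def trace_gaps(requirements, use_case_traces):
    """Compare requirements against use case trace links.

    requirements: iterable of requirement IDs.
    use_case_traces: dict mapping use case name -> list of requirement IDs.

    Returns a tuple of three sorted lists:
      - requirements that no use case traces to
      - use cases that trace to no requirement at all
      - requirement IDs cited by a use case but not in the requirements list
    """
    known = set(requirements)
    covered = set()
    untraced_use_cases = []
    unknown_ids = set()
    for name, req_ids in use_case_traces.items():
        if not req_ids:
            untraced_use_cases.append(name)
        for req_id in req_ids:
            if req_id in known:
                covered.add(req_id)
            else:
                unknown_ids.add(req_id)
    return sorted(known - covered), sorted(untraced_use_cases), sorted(unknown_ids)


# Hypothetical data: IDs and the last two use case names are made up.
requirements = ["REQ-1", "REQ-2", "REQ-3"]
use_case_traces = {
    "Bid on an Item": ["REQ-1"],
    "List an Item": [],
    "Close Auction": ["REQ-2", "REQ-9"],
}

uncovered, untraced, unknown = trace_gaps(requirements, use_case_traces)
print("Requirements with no use case:", uncovered)
print("Use cases with no requirement:", untraced)
print("Cited but unknown requirement IDs:", unknown)
```

An uncovered requirement suggests a missing use case; an untraced use case suggests functionality outside the agreed scope, or a requirement nobody wrote down. The same comparison could be repeated forward, from use cases to the class, sequence, and state-transition diagrams.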

Conclusion

This set of questions, based on syntax, domain expert, and traceability testing, and focused on completeness, correctness, and consistency, is designed to get you started testing in an area with which you may not be familiar. Future articles will apply the same principles to testing sequence diagrams, class diagrams, and state-transition diagrams.

Good luck testing.

Other articles in this series:

Sequence Diagrams: Testing UML Models, Part 2
