Assessing an Organization’s Capability to Effectively Implement Its Selected Agile Method

Shvetha Soundararajan and Dr. James D. Arthur write that the agile philosophy provides an organization or a team with the flexibility to adopt a selected subset of principles and practices. However, more often than not, these customized approaches fail to reflect the agile principles associated with the practices.

The agile philosophy provides an organization or a team with the flexibility to adopt a selected subset of principles and practices. However, more often than not, these customized approaches fail to reflect the agile principles associated with the practices. Organizations often lack the supporting environment to effectively implement the adopted methods, which results in the benefits afforded by agile methods not being fully realized [1]. Our work is motivated by the need to help organizations determine the extent to which they support the implementation of a selected agile method. More specifically, we propose to assess the capability of an organization to provide the supporting environment to effectively implement an agile method. Agile adoption in an organization is guided primarily by its culture, values, and the types of systems the organization is developing. When an organization decides to adopt an agile method, we ask the following questions:

- Does the adopted agile method have the potential to satisfy the values of the organization? More specifically, does the method have the principles and practices in place to achieve the touted values?

- Does the organization’s culture permit the adoption and application of the agile method? Does the organization’s environment have the capability to support the implementation of the method? For example, if the people in an organization are resistant to change, getting them to adopt agile methods can be a difficult undertaking.

Our assessment methodology is based on the recognition that any viable agile method reflects organizational objectives, asserts principles that support those objectives, and includes practices that embody those principles. To assess capability, we follow a twofold approach. Firstly, we evaluate the internal consistency of the agile method. That is, we assess the correspondence between the objectives, principles, and practices of the selected agile method. Clearly, those objectives should reflect the culture and values of the organization. Secondly, we examine the characteristics of the organization’s internal environment, namely its resources and competencies. In an organization, the characteristics of its people, the process that it adopts, and its projects are reflected in its internal environment. Hence, we identify observable characteristics of the people, process, and project associated with each practice. We then compute aggregated measures that indicate the presence or absence of the necessary resources and competencies. This article outlines our approach to assessing the capability of an organization to support the implementation of an agile method.

Overview

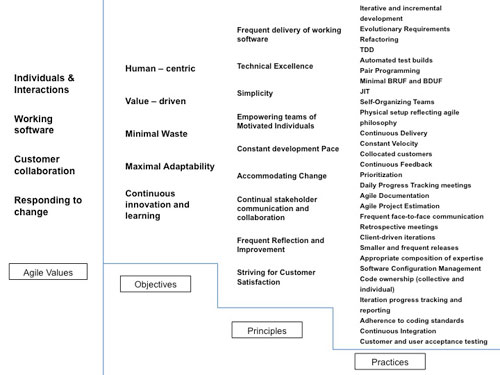

To guide our assessment, we propose the Objectives, Principles, and Practices (OPP) framework [2, 3]. Figure 1 shows the core structure of the OPP framework. The design of the OPP framework revolves around the identification of the agile objectives, principles that support the achievement of those objectives, and practices that reflect the “spirit” of those principles (figure 1). Well-defined linkages or relationships between the objectives and principles, and between the principles and practices are also established to support the assessment process. In figure 1, the arrows between the objectives, principles, practices, and properties depict the existence of linkages between the components.

Figure 1: Core structure of the OPP framework

Firstly, we assess the internal consistency of an agile method by traversing the linkages in a top-down fashion (figure 1). That is, given the set of objectives espoused by the agile method, we follow the linkages downward to ensure that the appropriate principles are enunciated, and that the proper practices are expressed. Secondly, we assess the adequacy of an organization to implement its adopted method by using a bottom-up traversal of the linkages. The bottom-up assessment, however, is predicated on the identification of people, process, and project properties associated with each practice that attest to the support of that practice. As shown in figure 1, by following the linkages upward from the properties, we can infer the adequacy of the environment for supporting the use of proper principles and the achievement of desired objectives.

The OPP framework identifies a set of five objectives that reflects the agile values and a supporting set of nine principles. It also provides a consolidated set of practices that help realize the prescribed principles. Figure 2 shows the set of objectives, principles, and practices that are reflective of the agile philosophy. We note, however, that the list of practices presented in figure 2 is not necessarily exhaustive, and is expected to change over time. We also recognize that different practices can be used to achieve the same set of principles.

Figure 2: Objectives, principles, and practices identified by the OPP framework

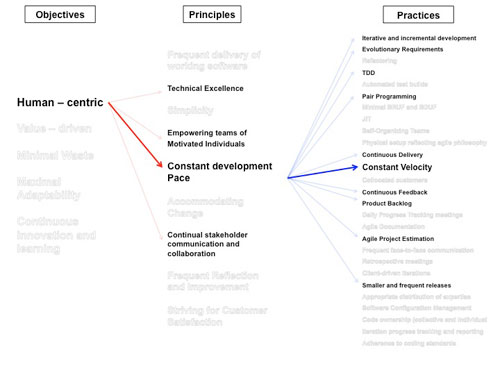

Currently, we have identified a preliminary set of linkages between the objectives and principles, and principles and practices. These linkages definitively identify the relationships among the components of the OPP framework. Consider the objective “Human-centric” (see figure 3) identified by the OPP framework. By “Human-centric” we mean that the people are more important than processes, practices, and tools. We conjecture that one of the principles that support the achievement of human-centricity is “Constant Development Pace.” In turn, from figure 3, we see that “Constant Velocity” is one of the practices that help realize “Constant Development Pace.”

Figure 3: Example linkages in the OPP framework

We have used learning, experience reports, white papers, books, etc., to identify all and confirm many of those relationships. To date, we have substantiated approximately 60% of the identified linkages.
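The linkage structure and its traversal can be sketched in a few lines of Python. This is only an illustration, not the framework's actual representation: the dictionary layout and the function names are assumptions, and the only linkages included are the single chain named in the example above.

```python
# Hypothetical encoding of OPP linkages as plain dictionaries.
# Only the example chain from the text is included; the full framework
# defines five objectives, nine principles, and many more practices.
OBJECTIVE_TO_PRINCIPLES = {
    "Human-centric": {"Constant Development Pace"},
}
PRINCIPLE_TO_PRACTICES = {
    "Constant Development Pace": {"Constant Velocity"},
}


def follow(sources, linkages):
    """Return every component reachable in one hop from the given sources."""
    reached = set()
    for source in sources:
        reached |= linkages.get(source, set())
    return reached


def invert(linkages):
    """Reverse a linkage map so that it can be walked bottom-up."""
    inverted = {}
    for source, targets in linkages.items():
        for target in targets:
            inverted.setdefault(target, set()).add(source)
    return inverted


# Top-down: objectives -> principles -> practices.
principles = follow({"Human-centric"}, OBJECTIVE_TO_PRINCIPLES)
practices = follow(principles, PRINCIPLE_TO_PRACTICES)

# Bottom-up: practices -> principles -> objectives.
supported_principles = follow(practices, invert(PRINCIPLE_TO_PRACTICES))
supported_objectives = follow(supported_principles, invert(OBJECTIVE_TO_PRINCIPLES))
```

Keeping the two linkage maps separate mirrors the two levels of linkage in the framework, and inverting them gives the bottom-up direction without storing the relationships twice.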

Assessing Internal Consistency of an Agile Method

The internal consistency of an agile method determines the sufficiency of that method to meet its stated objectives. That is, given a method’s objectives, are the principles that the framework prescribes for them also present? Then, for each principle enunciated by the framework, does the agile method tout the recommended practices? If necessary principles and practices are missing, then the internal consistency is suspect. To assess the internal consistency of an agile method, say Method X, we ask the following questions:

1. Firstly, does Method X tout objectives that are consistent with those stated by the OPP framework?

Consistency is confirmed if the set of objectives touted by Method X is equal to or a subset of those expressed by the framework. We accept subsets because organizations often tailor their agile development approach to reflect cultural distinctiveness and to emphasize their business goals and values. We would question, however, any objective highlighted by Method X that is not one of those enunciated by the OPP framework.

2. Secondly, does Method X state principles that support the achievement of its touted objectives?

Using the set of objectives articulated by Method X, we first identify the same set embodied within the OPP Framework, and then follow the linkages from those objectives to the corresponding set of supporting principles. This set of principles is precisely that needed to support the realization of the objectives publicized by Method X. An inconsistency between the two sets of principles indicates a potential deficiency in the ability of Method X to achieve its stated objectives.

3. Finally, does Method X express practices that are the implementations of its stated principles?

We use the principles enunciated by Method X to identify a corresponding set of principles within the OPP framework, and then follow the framework linkages from its principles to a related set of practices defined within the OPP framework. This identified set of practices supports the implementation of the principles to which they are connected. Hence, for Method X to necessarily implement the principles it enunciates, its touted set of practices must correspond to the set of OPP framework practices as determined above. An inconsistency between the two sets of practices indicates a potential deficiency in the ability of Method X to implement its stated principles.
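The three questions above amount to a sequence of set comparisons, which can be sketched as follows. This is a minimal illustration under stated assumptions: the `Framework` and `Method` classes, the function names, and the finding labels are hypothetical, and the framework instance contains only the example chain used in this article. Treating every difference as a finding is also a simplification, since different practices can realize the same principle.

```python
from dataclasses import dataclass


@dataclass
class Framework:
    """Hypothetical container for the OPP linkages."""
    objective_to_principles: dict
    principle_to_practices: dict


@dataclass
class Method:
    """What an agile method (Method X) touts."""
    objectives: set
    principles: set
    practices: set


def linked(sources, linkages):
    """Union of everything linked from the given sources."""
    result = set()
    for source in sources:
        result |= linkages.get(source, set())
    return result


def check_internal_consistency(method, framework):
    """Return the differences that make internal consistency suspect."""
    known_objectives = set(framework.objective_to_principles)
    # Question 1: objectives must be a subset of the framework's objectives.
    unrecognized = method.objectives - known_objectives
    # Question 2: principles needed for the method's objectives.
    expected_principles = linked(method.objectives & known_objectives,
                                 framework.objective_to_principles)
    # Question 3: practices needed for the method's stated principles.
    expected_practices = linked(method.principles,
                                framework.principle_to_practices)
    return {
        "unrecognized_objectives": unrecognized,
        "missing_principles": expected_principles - method.principles,
        "unexpected_principles": method.principles - expected_principles,
        "missing_practices": expected_practices - method.practices,
        "unexpected_practices": method.practices - expected_practices,
    }


framework = Framework(
    objective_to_principles={"Human-centric": {"Constant Development Pace"}},
    principle_to_practices={"Constant Development Pace": {"Constant Velocity"}},
)
# A Method X that states the objective and principle but omits the practice.
method_x = Method(
    objectives={"Human-centric"},
    principles={"Constant Development Pace"},
    practices=set(),
)
findings = check_internal_consistency(method_x, framework)
```

In this example the only non-empty finding is `missing_practices`, which flags that Method X states "Constant Development Pace" without touting "Constant Velocity".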

Clearly, the procedure outlined above assumes that the objectives, principles, practices, and linkages identified by the OPP framework are, themselves, necessary and sufficient for such comparisons. As previously described, our effort to consolidate the works of many, and to substantiate the linkages prescribed within the OPP framework, provides evidence that an examination process like the above is justified. Nonetheless, we will also be the first to state that the sets of objectives, principles, practices, and linkages are not intended to be closed sets. As such, we continually revisit the composition of each.

Assessing the Extent to which an Organization’s Environment can Support Its Adopted Method

Now that we have examined the internal consistency of the adopted agile method, how do we determine if the organization has the supporting environment to effectively implement that method? The answer lies in examining the characteristics of the organization’s internal environment. In an organization, the characteristics of its people, the process that it adopts, and its projects are reflective of the characteristics of its internal environment. Hence, we use observable properties of the people, process, and project in our assessment of the adequacy of the environment. For example, the presence of open physical environments in an organization is indicative of the organization’s capability to foster face-to-face stakeholder communication and collaboration.

Let us again assume that an agile method, Method X, adopted by an organization touts the objective “Human-centric,” the principle “Constant Development Pace,” and the practice “Constant Velocity.” To assess the adequacy of the environment to support the implementation of “Constant Velocity,” we need to identify the observable properties associated with that practice. We identify observable properties by asking the following questions:

1. What special skills or knowledge do the people involved in the project need to successfully adopt and implement the practice?

2. What characteristics of the process and/or the environment extend support for the implementation of the practice?

3. Are there any project-specific characteristics that support or impinge on the effective realization of the practice?

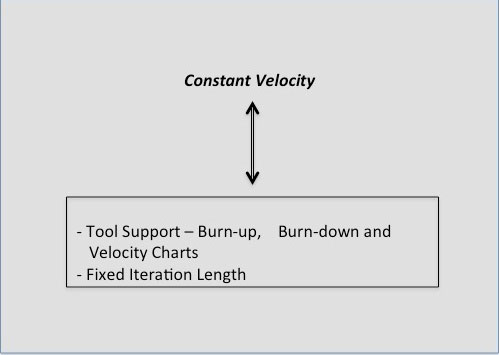

Asking these questions with respect to “Constant Velocity” provides us with a set of characteristics. Some of those characteristics are given below and also shown in figure 4:

- The existence of tool-support in the form of burn-up, burn-down, and velocity charts.

- The lengths of the iterations are fixed and are equal.

Figure 4: Properties associated with Constant Velocity

Once the observable properties are identified, our next step is to define assessment metrics for each (practice, property) pair. Aggregation of those metrics will be indicative of the capability of the organization to support the implementation of the practices. Finally, we need to determine the extent to which the principles touted by the method are supported by the organization. This is achieved by a further aggregation of the measures of the practices associated with each principle. Similarly, a further analysis from the principles to the objectives is carried out in order to assess the capability of the organization to support the achievement of the stated objectives. Our bottom-up approach to assessing the capability follows the process outlined by the Evaluation Environment (EE) methodology [4, 5].
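The bottom-up aggregation can be illustrated with a small Python sketch. Everything numeric here is an assumption made for illustration: the scores, the [0, 1] scale, and the use of an unweighted mean are placeholders, not the metrics or the aggregation rule of the EE methodology. The property names paraphrase the two characteristics listed for "Constant Velocity".

```python
from statistics import mean

# Hypothetical (practice, property) scores on an assumed [0, 1] scale.
PROPERTY_SCORES = {
    ("Constant Velocity", "Tool support for burn-up, burn-down, and velocity charts"): 1.0,
    ("Constant Velocity", "Iterations have fixed, equal lengths"): 0.5,
}

# Linkages for the example chain used in this article.
PRINCIPLE_TO_PRACTICES = {"Constant Development Pace": ["Constant Velocity"]}
OBJECTIVE_TO_PRINCIPLES = {"Human-centric": ["Constant Development Pace"]}


def practice_score(practice):
    """Aggregate the scores of all properties observed for one practice."""
    scores = [score for (name, _), score in PROPERTY_SCORES.items()
              if name == practice]
    return mean(scores)


def principle_score(principle):
    """Aggregate the scores of the practices linked to one principle."""
    return mean([practice_score(p) for p in PRINCIPLE_TO_PRACTICES[principle]])


def objective_score(objective):
    """Aggregate the scores of the principles linked to one objective."""
    return mean([principle_score(p) for p in OBJECTIVE_TO_PRINCIPLES[objective]])


human_centric_support = objective_score("Human-centric")
```

An unweighted mean is the simplest choice; a weighted scheme would fit equally well into the same three-level structure.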

Conclusion

Our approach to assessing the capability of an organization involves examining the internal consistency of the method, followed by determining the adequacy of the supporting environment to effectively implement its adopted agile method. Presently, we have a skeletal structure for our approach to assessing capability. To completely define our approach, we still need to identify the observable characteristics associated with each practice, and define metrics for assessing each (practice, property) pair. We recognize that the metrics to be defined for each indicator would yield values that may be subjective, objective, numerical, binary, range values, etc. Hence, we need to map the different types of values obtained onto a uniform scale of measurement to perform the aggregation. However, we have not yet defined that necessary mapping approach. Current research is underway to identify an appropriate uniform scale of measurement. The EE Methodology suggests one viable approach.

References

[1] A. Sidky and J. Arthur, "Value-Driven Agile Adoption: Improving An Organization's Software Development Approach," presented at the New Trends in Software Methodologies, Tools and Techniques - Proceedings of the Seventh SoMeT, Sharjah, United Arab Emirates, 2008.

[2] S. Soundararajan and J. D. Arthur, "A Structured Framework for Assessing the 'Goodness' of Agile Methods," in 18th IEEE International Conference and Workshops on Engineering of Computer Based Systems (ECBS), 2011, pp. 14-23.

[3] S. Soundararajan, "A Methodology for Assessing Agile Software Development Approaches," Research Proposal Document, Computer Science, Virginia Tech, 2011. Available from: http://arxiv.org/abs/1108.0427 (CoRR).

[4] O. Balci, R. J. Adams, D. S. Myers, and R. E. Nance, "Credibility assessment: a collaborative evaluation environment for credibility assessment of modeling and simulation applications," presented at the Proceedings of the 34th conference on Winter simulation: exploring new frontiers, San Diego, California, 2002.

[5] M. L. Talbert, "A Methodology for the measurement and evaluation of complex system designs," Ph.D. Dissertation, Computer Science, Virginia Tech, Blacksburg, 1995.

Shvetha Soundararajan is a Ph.D. candidate in the Department of Computer Science at Virginia Tech. She received her Master's degree in Computer Science from Virginia Tech in 2008. Her research interests include Agile Software Engineering, Requirements Engineering, Software Architecture, Software Process Improvement, and Usability Engineering. Her current research involves assessing the "goodness" of agile methods. She is a member of Upsilon Pi Epsilon (Computer Science Honor Society) and the IEEE Computer Society.

James D. Arthur is an Emeritus Professor of Computer Science at Virginia Tech (VPI&SU). He received B.S. and M.A. degrees in Mathematics from the University of North Carolina at Greensboro in 1972 and 1973, and M.S. and Ph.D. degrees in Computer Science from Purdue University in 1981 and 1983. His research interests include Software Engineering (Methods and Methodologies supporting Software Quality Assessment and IV&V Processes), Parallel Computation, and User Support Environments. Dr. Arthur is the author of over 30 papers on software engineering, software quality assessment, IV&V, and user/machine interaction. He has served as: participating member of IEEE Working Group on Reference Models for V&V Methods; Chair of Education Panel for National Software Council Workshop; Guest Editor for Annals of Software Engineering special volume on Process and Product Quality Measurement; and Principal Investigator or Investigator on 14 externally funded research projects totaling in excess of $1.4 million. Dr. Arthur is a member of Pi Mu Epsilon (Math Honor Society), Upsilon Pi Epsilon (Computer Science Honor Society), Golden Key National Honor Society, Sigma Xi (National Research Society), ACM, and the IEEE Computer Society.