Getting Started

Test professionals know that metrics are an important project deliverable. Developing a system to create, track, and report test results is a different story. The "whys" of test metrics have been covered before, so I'll instead show you how to produce the data to address those "whys." That data supports:

- Quantifying the test team's contribution to the project

- Showing a project's testing progress (Pass %)

- Developing product quality measures (Executed/Pass or Fail)

- Gathering historical data to predict future progress

There are, of course, limitations to any measurement system:

- For large projects, it is difficult to execute every test for every new iteration.

- Test quality will affect measurement quality.

- Varying levels of specificity may create false positives or negatives.

- Limitations of the test tools (measurement devices) can affect the accuracy of metrics.

You don't have to spend a lot of money to start a test metrics program; a spreadsheet-based system will do. There are benefits to commercial test management tools that should drive your decision. Even so, many of these tools use industry-standard databases that you can read from directly to create reports that meet your organization's needs.

What Data Do I Start With?

It's easy to get carried away with tracking, but there are only a few measurements needed to start a rich test metrics program. Start with the basics and determine which are most valuable and can be used to derive other metrics.

Make sure everyone in your organization uses the same terminology. Using a term such as "% Complete" to describe the "Pass %" is misleading unless the definitions are clear. For this article, let's use these definitions:

| Term | Definition |
| --- | --- |
| Test | An instruction to perform a particular action with a clear purpose, assumed setup parameters, and expected results |
| Test Execution | Performing a test or test group |
| Test Status | The results of a test when executed |

Let's keep to a few simple and well-understood measurements:

| Term | Definition |
| --- | --- |
| Pass | This test passed during this test execution. |
| Fail | This test failed during this test execution. |
| Not Executed | This test was not executed during this test execution. |

These measurements are the most basic we can track when executing a test. They can be tallied along with our total number of tests to form percentages.
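If you'd rather prototype the tally outside a spreadsheet, here is a minimal Python sketch. The `Status` enum, the `tally` helper, and the sample results are my own illustrative assumptions, not part of any test management tool.

```python
from collections import Counter
from enum import Enum


class Status(Enum):
    """The three basic test statuses used in this article."""
    PASS = "Pass"
    FAIL = "Fail"
    NOT_EXECUTED = "Not Executed"


def tally(statuses):
    """Count tests by status, reporting zero for statuses that never occur."""
    counts = Counter(statuses)
    return {status: counts.get(status, 0) for status in Status}


# Hypothetical results for one test execution (made-up data).
results = [Status.PASS, Status.PASS, Status.FAIL, Status.NOT_EXECUTED]
totals = tally(results)
print({status.value: count for status, count in totals.items()})
```

Restricting the status to a fixed set of values keeps everyone on the same terminology, which is the point of the definitions above.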

Tracking Progress

Figure 1: Set of tests and its execution status

We have determined the total number of tests, their results, and the Pass %, Fail %, and Not Executed % using these simple formulas:

| Label | Calculation |
| --- | --- |
| Total Tests | A count of all tests to be measured |
| Total by Test Status | The count of tests by their status |
| Pass % | Percentage of tests in Pass status |
| Fail % | Percentage of tests in Fail status |
| Not Executed % | Percentage of tests not executed |
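The calculations in the table can be sketched in a few lines of Python. The function name `status_percentages` and the counts passed to it are hypothetical; each percentage is simply the status count divided by Total Tests.

```python
def status_percentages(passed, failed, not_executed):
    """Return Total Tests and the percentage of tests in each status."""
    total = passed + failed + not_executed
    if total == 0:
        raise ValueError("no tests to measure")
    return {
        "Total Tests": total,
        "Pass %": 100 * passed / total,
        "Fail %": 100 * failed / total,
        "Not Executed %": 100 * not_executed / total,
    }


# Hypothetical counts for a small set of tests (made-up data).
report = status_percentages(passed=6, failed=2, not_executed=3)
for label, value in report.items():
    print(f"{label}: {value:.0f}")
```

Because every test is in exactly one status, the three percentages always add up to 100, which makes a handy sanity check on the raw data.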

The Daily News--Summarizing What Testing Accomplished Today

We've uncovered some useful information. At the time that this report was generated, the Pass % was 55% and 27% of the tests hadn't been executed. There should be several issues in the defect management system to record the failures.

Anyone involved can get a quick idea of the current testing status. Combining this with previous project reports, the project team can see what happened yesterday and forecast what may happen tomorrow.

By managing the reporting and raw data separately, we can add more tests and still get the data we need. Let's use an example where "Setup" is the functional area being tested (see Figure 2).

Figure 2

This allows the report to show information for specific areas of the project and provide more granular information. Now let's manipulate the data a bit to glean other information. To reflect the complexity of actual product test efforts, the Dialing test area has been added and a Summary created.
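To illustrate keeping raw data and reporting separate, here is a rough Python sketch that groups raw (area, status) rows by functional area and then builds a Summary row. The rows are invented for illustration and do not reproduce the figures; `report_by_area` is a hypothetical helper.

```python
from collections import defaultdict

STATUSES = ("Pass", "Fail", "Not Executed")

# Raw data: one (area, status) row per test. Made-up sample rows.
raw_rows = [
    ("Setup", "Pass"),
    ("Setup", "Pass"),
    ("Setup", "Fail"),
    ("Dialing", "Pass"),
    ("Dialing", "Not Executed"),
]


def report_by_area(rows):
    """Count tests per status for each area, plus a 'Summary' across areas."""
    counts = defaultdict(lambda: dict.fromkeys(STATUSES, 0))
    for area, status in rows:
        # An unknown status raises KeyError, which flags inconsistent terminology.
        counts[area][status] += 1
    summary = dict.fromkeys(STATUSES, 0)
    for area_counts in counts.values():
        for status in STATUSES:
            summary[status] += area_counts[status]
    report = dict(counts)
    report["Summary"] = summary
    return report


area_report = report_by_area(raw_rows)
for area, area_counts in area_report.items():
    print(area, area_counts)
```

Adding a new test or a new area only means adding rows to the raw data; the report logic stays the same.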

Figure 3: Total Executed and Executed & Pass has been added to provide data regarding product quality.

| Term | Definition |
| --- | --- |
| Total Executed | Total tests that have been executed at least once |
| Executed & Pass | Percentage of the Total Executed that have passed |
| Test status | The results of a test when executed |

| Label | Calculation |
| --- | --- |
| Total Executed | Pass + Fail = Total Executed |
| Total Executed % | Total Executed expressed as a percentage of the total tests |
| Executed & Pass | Percentage of the Total Executed that have passed |
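Here is one way the execution measures could be computed in Python. This is a sketch under my reading of the definitions above: Total Executed is Pass + Fail, Total Executed % is taken against all tests, and Executed & Pass is Pass divided by Total Executed. The function name and counts are hypothetical.

```python
def execution_metrics(passed, failed, not_executed):
    """Derive Total Executed, Total Executed %, and Executed & Pass %."""
    total = passed + failed + not_executed
    executed = passed + failed  # Pass + Fail = Total Executed
    return {
        "Total Executed": executed,
        # Guard against division by zero when nothing exists or nothing ran.
        "Total Executed %": 100 * executed / total if total else 0.0,
        "Executed & Pass %": 100 * passed / executed if executed else 0.0,
    }


# Hypothetical counts (made-up data).
metrics = execution_metrics(passed=6, failed=2, not_executed=3)
for label, value in metrics.items():
    print(f"{label}: {value:.1f}")
```

Note that Executed & Pass ignores tests that haven't run, so it speaks to the quality of what was tested rather than to overall progress.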

As the project progresses, the Total Executed % should increase. If not, check the following:

- Are testers not receiving new code or an improved product?

- Are more test cases being added? If so, why weren't they written sooner?

- Are requirements changing?

- Are testers testing?

Executed & Pass should also increase over the course of the project. By observing historical data, you should be able to predict what the Total Executed and Executed & Pass levels will be, based on the expected quality of the product.

Managing the data

Basic test management and reporting can be accomplished with a spreadsheet. Tests can be recorded by area and reported on as frequently as you like. There are some inherent limitations (e.g., a tester needs to actively manage each test version).

This system is good for a single tester or a few testers with well-defined responsibilities. It can be modified to be as simple or complex as required, referencing external spreadsheets and summary reports.

What about larger testing efforts? Most of the reporting here is based on data collected by a commercial test management system. That system failed to provide useful reporting, so we built our own. Since the tests and results were captured in a database, we could read them directly and continue to use spreadsheets for the reports. Using information from databases, intermediary applications, and spreadsheets, we created a reporting system that was accurate and unchallenged.
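As a rough illustration of reading results from a database and handing them to a spreadsheet, the sketch below uses Python's built-in `sqlite3` and `csv` modules. The `test_results` table, its columns, and the rows are hypothetical stand-ins; a commercial tool's real schema will differ, so treat this as a pattern rather than a recipe.

```python
import csv
import io
import sqlite3

# In-memory database standing in for a test management tool's database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE test_results (area TEXT, test_name TEXT, status TEXT)")
conn.executemany(
    "INSERT INTO test_results VALUES (?, ?, ?)",
    [
        ("Setup", "install", "Pass"),
        ("Setup", "configure", "Pass"),
        ("Setup", "uninstall", "Fail"),
        ("Dialing", "local call", "Pass"),
        ("Dialing", "long distance", "Not Executed"),
    ],
)

# Count tests per area and per status in a single query.
query = """
    SELECT area,
           COUNT(*) AS total,
           SUM(CASE WHEN status = 'Pass' THEN 1 ELSE 0 END) AS passed,
           SUM(CASE WHEN status = 'Fail' THEN 1 ELSE 0 END) AS failed,
           SUM(CASE WHEN status = 'Not Executed' THEN 1 ELSE 0 END) AS not_executed
    FROM test_results
    GROUP BY area
    ORDER BY area
"""
rows = conn.execute(query).fetchall()
conn.close()

# Write the counts as CSV text, which any spreadsheet can import.
buffer = io.StringIO()
writer = csv.writer(buffer)
writer.writerow(["Area", "Total", "Pass", "Fail", "Not Executed"])
writer.writerows(rows)
csv_text = buffer.getvalue()
print(csv_text)
```

The query does the counting and the spreadsheet does the presentation, which mirrors the split between raw data and reporting described earlier.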

Weighting

The volume of data presented should also demonstrate another useful point: weighting. In most cases, it's safe to assume that areas with larger volumes of tests are more important, but there are exceptions: regression testing efforts often have large test volumes but aren't considered a top priority. Given the mathematical nature of the data, weighting the tests to provide accurate reporting for your organization should be straightforward. Document the process so your readers understand the significance.
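One possible weighting scheme, sketched in Python, is a weighted average of each area's pass rate. This is only one of many approaches, and the weights, counts, and the `weighted_pass_percent` helper are all made up for illustration; your organization would choose its own.

```python
def weighted_pass_percent(areas, weights):
    """Weighted average of per-area pass rates.

    areas:   {area name: (passed, total tests)}
    weights: {area name: relative importance}
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for name, (passed, total) in areas.items():
        if total == 0:
            continue  # an area with no tests contributes nothing
        weighted_sum += weights[name] * passed / total
        weight_total += weights[name]
    if weight_total == 0:
        raise ValueError("no weighted tests to report on")
    return 100 * weighted_sum / weight_total


# Hypothetical data: Regression has the most tests but the lowest priority.
areas = {"Setup": (8, 10), "Dialing": (5, 10), "Regression": (90, 100)}
weights = {"Setup": 3, "Dialing": 3, "Regression": 1}

by_volume = 100 * sum(p for p, _ in areas.values()) / sum(t for _, t in areas.values())
by_weight = weighted_pass_percent(areas, weights)
print(f"Pass % by test volume: {by_volume:.1f}")
print(f"Pass % by weight:      {by_weight:.1f}")
```

With these invented numbers, the volume-based figure is dominated by Regression, while the weighted figure reflects the trouble in Dialing. Whichever scheme you use, publish the weights alongside the report.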

Reporting Intervals

It is important to find a time-based reporting pattern. My organization developed an application that queried the data from the database several times a day, and the end-of-day report was considered the report of record. Daily reports may provide too much information for your project.
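If you sample the data several times a day, picking the report of record amounts to keeping the latest snapshot for each date. Here is a small Python sketch of that idea; the timestamps, values, and the `reports_of_record` helper are hypothetical and not a description of the application my organization built.

```python
from datetime import datetime


def reports_of_record(snapshots):
    """Keep the last snapshot of each day.

    snapshots: iterable of (timestamp, pass_percent) in any order.
    Returns {date: pass_percent}, ordered by date.
    """
    latest = {}
    for taken_at, pass_percent in snapshots:
        day = taken_at.date()
        if day not in latest or taken_at > latest[day][0]:
            latest[day] = (taken_at, pass_percent)
    return {day: value for day, (_, value) in sorted(latest.items())}


# Made-up snapshots taken several times a day.
snapshots = [
    (datetime(2024, 1, 8, 9, 0), 40.0),
    (datetime(2024, 1, 8, 17, 30), 45.0),
    (datetime(2024, 1, 8, 13, 0), 43.0),
    (datetime(2024, 1, 9, 10, 0), 47.0),
]
record = reports_of_record(snapshots)
for day, pass_percent in record.items():
    print(day.isoformat(), pass_percent)
```

The same grouping works for weekly intervals if daily reports turn out to be too much: group by week instead of by date.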

The Week in Review--Summarizing the Project's Progress

You're generating volumes of data at least daily and are more aware of the testing effort. Now begins the real use of the data--generating theoretical possibilities and testing targets.

Once a system has been created for the daily report, this information needs to be put into perspective. Telling the project team you've passed 45% of the tests is meaningless without context. It becomes meaningful when the team can see, for example, that yesterday's testing progress showed 55% Pass and today's shows 45%. Consolidate each daily report into a project summary.

What is transferred from the daily report to the project summary? The number of tests for each area and total number of tests are recorded. Since adding and removing tests affects the Pass % (and all other percentages), it helps to see what changed during the course of the project and how that affected the testing effort. Requirement changes, scope creep, removed functionality, and epiphanies by the tester all can cause a change in test volume. It's also important to map these events back to the changes they caused, so fluctuations can be accounted for and those changes avoided, or at least understood, in the future.

The Pass and Executed tests are added to help chart changes in those values. Fail and Not Executed aren't important enough to transfer, since the most significant information needed is the Pass % (If the Pass % isn't approaching 100%, there's a problem). Executed % and Executed & Pass % are transferred to see how they trend over the course of the project. Graphing all this data makes it a bit easier to analyze.
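The consolidation step might look something like this in Python. It is a sketch with invented daily counts: each daily report contributes one summary row carrying the total tests, Pass, Executed, the derived percentages, and the change in Pass % since the previous report. The field names and the `summarize` helper are hypothetical.

```python
def summarize(daily_reports):
    """Turn daily counts into project summary rows, oldest first."""
    summary = []
    previous_pass_pct = None
    for report in daily_reports:
        executed = report["passed"] + report["failed"]
        pass_pct = 100 * report["passed"] / report["total"]
        summary.append({
            "date": report["date"],
            "total_tests": report["total"],
            "pass": report["passed"],
            "executed": executed,
            "pass_pct": pass_pct,
            "executed_pct": 100 * executed / report["total"],
            "executed_pass_pct": 100 * report["passed"] / executed if executed else 0.0,
            # Day-over-day change gives the Pass % its context.
            "pass_pct_change": None if previous_pass_pct is None
            else pass_pct - previous_pass_pct,
        })
        previous_pass_pct = pass_pct
    return summary


# Made-up daily reports: Pass % drops from 55% to 45%.
daily_reports = [
    {"date": "day 1", "total": 20, "passed": 11, "failed": 4},
    {"date": "day 2", "total": 20, "passed": 9, "failed": 7},
]
project_summary = summarize(daily_reports)
for row in project_summary:
    print(row)
```

Keeping the total test count in every row makes it easier to tell a genuine drop in Pass % from one caused by tests being added or removed.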

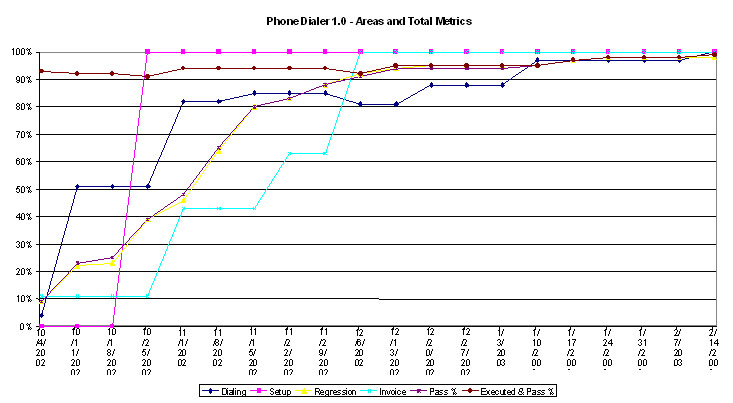

Figure 4

Figure 4 summarizes all the data that we've discussed up to this point graphed for quick reference and comparison to the project progress. The Pass % for each area is displayed as well as the overall values for Total Pass % and Executed & Pass %. As you can see, tremendous progress was made at the beginning of the project. It then took half of the project timeline to pass the last 10% of the tests! It turns out that this was a pretty typical observation. We can also see that the test results for Dialing on 12/6 were lower than the last report on 11/29. This is important to the part of the team working on Dialing, but the volume of tests in the Regression area of the project had more weight on the total project progress and pushed the Total Pass % up.

Conclusion

Metrics can help explain project progress. You now should have enough information to begin some basic test project tracking. In Part 2, we'll discuss applying what we've learned to future projects.

