When I first started coaching agile teams I found it a challenge to coach testers. This was understandable because the agile movement was really developer-centric and much of the resource material reflected that. I spent time talking with skilled agile testers and watching them in action. A key thing I found was that they pose questions that no one else thinks to ask. In this article we will explore those questions and the context in which they are asked.

First of all, what do I mean by testing? I like James Bach's definition below because it suggests that testing is much more than a suite of test cases:

"Testing: Questioning a product in order to evaluate it."

I find that good testers don't just question the final product. They question the product before work has even started and while it is being developed. And they also challenge the team itself. While this is true of many traditional testers, I have found these lines of questioning to be more prevalent on agile teams because a "whole team" mentality is encouraged and quality is a team responsibility.

The Agile Testing Matrix

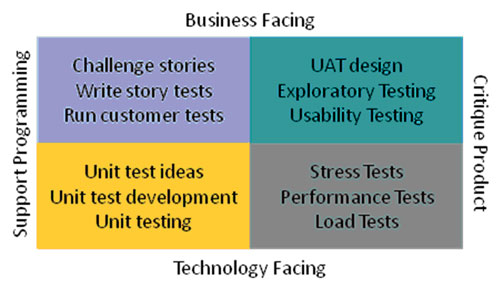

There are a lot of questions a tester can ask. We will use Brian Marick's agile testing matrix to help frame them.

I have added some typical test activities that could take place in each quadrant.

I will take a slight liberty with Brian's matrix by using "Develop Product" instead of "Support Programming" as an axis.

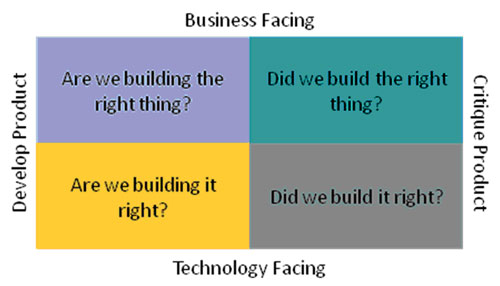

Now we can frame the meta questions that drive effective agile testing.

It should be apparent that the activities and questions in the right-most quadrants are equally valid on traditional and agile projects. So there is not a lot new here for testers on agile teams. The main difference is that these activities run throughout the product life-cycle rather than being phased as post-development work.

Are we building the right thing?

Programmers are often inwardly focused, asking the question "How can I make this work?" Testers balance this perspective with a more customer-centric view by asking the product owner outward-facing questions during iteration planning:

| Question | Why ask it |
| --- | --- |
| What benefit would users get from this story? | This helps ensure that the story delivers tangible value. The benefit can be captured using the template first suggested by Rachel Davies: "As a [role] I want to [action] so that I can [benefit]." |
| How would we know this story is working? | Asking this aligns the team on how the new capability is intended to work. This in turn helps the team identify and estimate tasks. Examples of successful completion can be captured in story tests as "happy paths". |
| What are alternate ways this story could work? | Considering alternate ways that a story can unfold keeps the team alert to alternate solutions and prevents them from tunneling in on a solution too early. This question may also expose hidden complexity, which helps scope the work more accurately. Examples can be captured in story tests as "alternate paths". |
| In what ways could this story fail? | This helps the team consider failure scenarios they may have overlooked. It bounds the problem and removes confusion about what is in and out of scope. Examples of failure scenarios can be captured in story tests as "sad paths". |
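
To make the three kinds of story test concrete, here is a minimal sketch in Python. The story (withdrawing cash from an account), the `withdraw` function, and the `InsufficientFunds` exception are all hypothetical and exist only for illustration; a real team would write these tests in whatever story-test tool it already uses.

```python
class InsufficientFunds(Exception):
    """Raised when a withdrawal exceeds the available balance."""


def withdraw(balance: int, amount: int) -> int:
    """Hypothetical story under test: return the balance after a withdrawal."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    if amount > balance:
        raise InsufficientFunds(f"cannot withdraw {amount} from {balance}")
    return balance - amount


def test_happy_path():
    # "How would we know this story is working?"
    assert withdraw(balance=100, amount=30) == 70


def test_alternate_path():
    # "What are alternate ways this story could work?" Emptying the account.
    assert withdraw(balance=100, amount=100) == 0


def test_sad_path():
    # "In what ways could this story fail?" Overdrawing must be rejected.
    try:
        withdraw(balance=100, amount=101)
    except InsufficientFunds:
        return
    raise AssertionError("expected InsufficientFunds")


if __name__ == "__main__":
    for test in (test_happy_path, test_alternate_path, test_sad_path):
        test()
        print(f"{test.__name__}: ok")
```

Each test is named after the question that produced it, so the conversation from iteration planning stays visible in the test suite.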

Are we building it right?

The intent of the questions here is to help instill a quality mindset in the team by finding and preventing defects while work is underway. The focus is on helping the team get stories "done, done", meaning that the story is fully complete and ready to deploy. The questions here promote interactions with the entire team.

| Question | Why ask it |
| --- | --- |
| Can we try it together before we check it in? | This question realizes the benefits of focused exploratory testing before code is checked in. By pairing with developers, testers can share and promote good test practices. Insights gained through exploratory testing can also lead to better automated story and unit tests. |
| Does the happy path work? | While all paths are important, it is counter-productive to focus on alternate or sad paths before the primary happy path is working. Agile testers answer this question before testing any further. |
| Is this a problem? | A tester will often highlight an anomaly to the team in the form of a question. This helps avoid a developer-tester blame game and opens the door to an open dialog on the story. The result of this question should be a shared understanding of whether the anomaly is a new bug, a known bug or perhaps intended functionality. |
| Can we fix this now? | It is usually in the best interests of the team to fix newly found defects as soon as possible. The result of this question should be one of: an immediate pairing session with the developer to fix the defect; a defect card, if it can't be fixed right away but can be fixed within the sprint; or a defect story added to the product backlog. |
| Is this story done? | A key shift for testers in this quadrant is moving away from the question "Is this potentially shippable?" towards "Did we meet the intent of this story?" The shippable questions are asked and answered in the right quadrants. |

Did we build the right thing?

Exploratory testing focuses on asking questions that trigger discovery. Context-driven testing principles and session-based test methods provide an effective framework for asking exploratory questions and sharing the discoveries. I have found little value in scripted manual tests because automated unit tests and story tests provide a more effective regression suite. More importantly, session-based exploratory testing is more effective at finding defects. Outputs from exploratory sessions give the team a deeper awareness of the system's strengths and weaknesses.

The table below is a small sample of questions that could be asked in exploratory test sessions. For a starting point on a more complete list of testing heuristics, check out the Test Heuristics Cheat Sheet by Elisabeth Hendrickson, James Lyndsay and Dale Emery. Better yet, talk to a skilled exploratory tester.

| Question | Why ask it |
| --- | --- |
| How might a different user use the system? | Ask yourself how a user other than the primary user for the story might use the feature. Elisabeth Hendrickson suggests adopting "extreme personae" to stress system usage. Jean McAuliffe provides some good persona suggestions in her Lean-Agile Testing Seminar, although I really like the Bugs Bunny and Charlie Chaplin personae suggested by Brian Marick. |
| How could the system be used in different scenarios? | Try using the system in different non-obvious scenarios. Some scenarios may simply be different states, such as low CPU, memory or disk space, or starting after a network or power outage. You may also want to consider more extreme "soap opera" scenarios, first suggested by Hans Buwalda. Michael Bolton suggests a possible soap opera example that will give you a flavor for the line of thinking. |
| How does the system behave at boundary conditions? | Test the system at values just below, at and just above boundary conditions. For example, does the system work when I paste 255, 256 or 257 characters into a text box? |
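
The boundary question above can be sketched as a small probe. This is an illustration only: the `accepts` function stands in for a hypothetical text box, and the limit of 256 characters is an assumption inferred from the 255/256/257 example rather than something the article specifies.

```python
MAX_LENGTH = 256  # assumed limit; the article only names 255, 256 and 257


def accepts(text: str, max_length: int = MAX_LENGTH) -> bool:
    """Hypothetical text-box rule: accept input of up to max_length characters."""
    return len(text) <= max_length


def boundary_lengths(limit: int) -> list[int]:
    """Return the lengths just below, at, and just above a limit."""
    return [limit - 1, limit, limit + 1]


def probe(limit: int = MAX_LENGTH) -> dict[int, bool]:
    """Paste strings of each boundary length and record whether they are accepted."""
    return {n: accepts("x" * n, limit) for n in boundary_lengths(limit)}


if __name__ == "__main__":
    for length, ok in probe().items():
        print(f"{length} characters: {'accepted' if ok else 'rejected'}")
```

In an exploratory session the interesting result is any disagreement between what the probe reports and what the team expected at each of the three lengths.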

Did we build it right?

This is very much "traditional" testing applied to para-functional aspects of the system like performance, reliability and so on. Again, the questions below are a small subset of what should be asked.

| Question | Why ask it |
| --- | --- |
| Is the response time of the system acceptable? | Initial answers to this question set performance baselines. Subsequent testing provides information for trending, which allows us to detect changes in performance quickly. |
| What are the bottlenecks in the system? | Once performance baselines are established, additional load can be applied to the system until response times are unacceptable. Through the use of tools, the tester can provide the team with insights that allow the system to be tuned. |
| What are the largest loads that the system can handle? | The questions here are typically extreme, like "What is the largest file the system can handle?" or "What is the maximum number of concurrent users the system can support?" or "How long can the system run at maximum load?" |
| How well does the system recover when over-stressed? | This asks how the system reacts to stress and how well it recovers after being over-stressed. |
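
The baseline, trending and load questions above can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the function names, the 20% tolerance, and the load-doubling strategy are not from the article, and a real team would normally use a dedicated load-testing tool rather than hand-rolled timing.

```python
import statistics
import time
from typing import Callable, Optional


def measure(operation: Callable[[], object], runs: int = 5) -> float:
    """Return the median wall-clock time of operation, in seconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        operation()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def has_regressed(baseline: float, current: float, tolerance: float = 0.20) -> bool:
    """Flag a run that is more than `tolerance` slower than the baseline."""
    return current > baseline * (1 + tolerance)


def find_breaking_load(
    response_time_at: Callable[[int], float],
    limit_seconds: float,
    start: int = 1,
    max_load: int = 1024,
) -> Optional[int]:
    """Double the load until the response time exceeds the limit.

    Returns the first load that breaks the limit, or None if none does.
    """
    load = start
    while load <= max_load:
        if response_time_at(load) > limit_seconds:
            return load
        load *= 2
    return None


if __name__ == "__main__":
    baseline = measure(lambda: sum(range(10_000)))
    current = measure(lambda: sum(range(10_000)))
    print("regressed:", has_regressed(baseline, current))
```

Storing the value returned by `measure` after each build gives the trend line; `has_regressed` is the check that radiates a change back to the team quickly.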

Conclusion

Agile testers add considerable value to agile teams by asking the right questions and radiating answers back to the team.

There are a few key points I would like to make:

- Agile testing in the left-most quadrants requires strong team collaboration. This adds significant value by helping the team get to "done, done", preventing defects, and finding other defects earlier.

- While operating in the left-most quadrants the testing mindset needs to be "Are we done?" rather than "Is this shippable?"

- Traditional testing techniques will work very well on agile teams when operating in the right-most quadrants. The main shift for testers will be to pragmatically automate some of this work to support continuous testing and to radiate information back to the team on a regular basis.

- Session-based testing works well with agile teams.

- Manual scripted test cases have little value on most agile teams.

About the Author