In his CM: the Next Generation series, Joe Farah gives us a glimpse into the trends that CM experts will need to tackle and master based upon industry trends and future technology challenges.

There are some common sense strategies that can be employed to help ensure good requirements. For example, the development team should clearly understand both the customer's business and the industry, along with its process requirements. This will involve working closely with the customer and with industry experts.

Another concept is to never take a customer's requirements at face value. The customer may lack expertise in some areas, resulting in loose or inadequate requirements. More frequently, you may have more advanced expertise about the industry or technology and how it is advancing. You may also have experience on your side from working with other customers on similar requirements. Passing this feedback to your customers will help you to establish trust and may open the door for use of more generic product components to fulfill what otherwise might have been a more difficult set of requirements.

A third point is to realize that the customer's understanding of the problem space will change over time, for various reasons. By adopting a process that supports change, you'll remove a number of obstacles as your customer comes at you with requirements creep.

An Incomplete Requirements Spec

Requirement specification is an inexact art. It's difficult and at best you'll only specify a partial set of requirements prior to the start of development. You may think you have them down exactly, but read through your requirements document and you'll find something missing, or something that can be more completely specified. Perhaps for the design of a bolt, a window, or even a bicycle you might be able to fully and completely specify the requirements up front. But move to an aircraft, a communications system or even a PDA, and things get more difficult. There are several reasons for this.

First of all, technology is moving forward rapidly. Availability of a new component may heavily influence requirements. Instead of asking for a low-cost last-mile copper or fiber connection, one might ask for wireless access for the last mile, dramatically improving costs. Instead of a 100MB mini-hard drive, one might instead look at a 64MB SD card or thumb drive that can dramatically improve reliability while eliminating some of the damping features to guard against sudden impact.

Second of all, product development has moved from a "build this" to a "release this" process, fully expecting that new releases will address the ever-changing set of requirements. In fact, one of the key advantages of software is that you can change your requirements and still meet them after delivery. For example, it's nice that the Hubble telescope was originally designed to work with 3 gyroscopes, but it was even nicer that in 2005 a software change allowed it to run with just 2 gyroscopes. And engineers have another change that will allow it to work with just a single working gyro. One of the key requirements coming out of this demonstration is flexibility. You often want your products to be flexible enough to be adapted after production. We've seen this with modems, cell phones and other common products where change was rapid over the years. The focus moves from ensuring that you have all of the right features, to ensuring that over time you can deliver more features to existing product.

Third, systems are more interconnected these days. So you want this PDA to work with this office software, that communications software to use a particular set of protocols, etc. The result is that one has an endless wish list of interface requirements, ordered by what the real market demand is.

Quality Requirements

What makes a good requirement? Sometimes it's easy. For example, a new C compiler might have a requirement that it compile all existing GNU C programs. Or a new communications system must be able to communicate with existing Bell trunk lines. An airplane's navigation system must be able to work with existing air traffic control systems.

These easy requirements typically owe their ease of specification to the fact that there is an accepted standard already in place with which it must comply. Standards are really a type of requirement. A new product design may choose to have a specific standard compliance as a requirement, or not. The standards themselves often go through years of multi-corporate evolution.

More generally, if you want a quality requirement, you really need to look at two things: Can it be clearly and completely expressed and is it testable?

If you can take your requirements and write test cases for them, you're more than halfway there. In fact, one of the benefits of standards is that they often have full test suites associated with them. Even if they don't, plugging them into the real world provides a very good test bed, when that can be done safely! This is closely related to the problem reporting axiom: most of the work in fixing a problem is in being able to reproduce it. When you can reproduce a problem, you have both a clear specification of the problem and a means of testing the fix. It's the same with requirements: express it and write a test case for it and you've done most of the work, usually.

How Can We Help

How can the CM/ALM profession help with producing quality requirements? By providing tools that manage change to requirements, and that manage test case traceability.

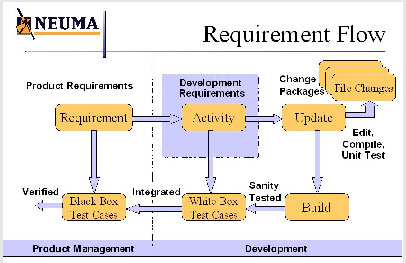

Let's take a look at the whole picture. This is what a typical requirements flow looks like.

There are typically two different ways of dealing with requirements. One is to call customer and product requirements “requirements” and to call system and design level requirements “activities” or “design tasks”. The input requirements from product management are known as the requirements while those things that the design team has authority and control over are known as activities/tasks. In this scenario (shown in the diagram), requirements is used to denote a set of requirements on the product development team.

The other way of dealing with requirements is simply to treat requirements at different levels, based on the consumer of the requirements. A customer requirements tree is allocated to the next level as a product requirements tree, which is allocated to the next level as a system requirements tree, which is allocated to the design requirements tree. Each level has a different owner and a different customer. The actual levels, and their names, may differ somewhat from shop to shop. The traceability from implementation is from level to level and each level must completely cover off the requirements of the preceding level.

I don't view these as two different ways of working, but rather two different ways of identifying requirements and design tasks. Both require full traceability and both track the same information with respect to requirements. I prefer the former because the type of data object, and the authority exercised over product development team tasks, is very different from that for customer/product requirements. While the development team may have some input and interaction with the customer and product management in establishing the customer/product requirements, it is there that a contractual agreement is made: the team commits to a set of requirements in a given timeframe.
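The rule that each level must completely cover the preceding level lends itself to a mechanical check. The following is only a minimal sketch: the requirement identifiers, the `allocated_from` mapping and the `uncovered` helper are hypothetical names chosen for illustration, not part of any particular CM/ALM tool.

```python
# Hypothetical allocation links: each product-level requirement lists the
# customer-level requirements it was allocated from.
allocated_from = {
    "PROD-1": ["CUST-1"],
    "PROD-2": ["CUST-1", "CUST-2"],
    "PROD-3": ["CUST-2"],
}

# Hypothetical customer-level requirements (the preceding level).
customer_level = ["CUST-1", "CUST-2", "CUST-3"]


def uncovered(upper_level, allocated_from):
    """Return upper-level requirements that no lower-level requirement covers."""
    covered = {parent for parents in allocated_from.values() for parent in parents}
    return [req for req in upper_level if req not in covered]


gaps = uncovered(customer_level, allocated_from)
print(gaps)
```

Any requirement reported here has not yet been allocated to the next level down, so the lower-level tree does not yet cover its parent level.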

After that, the allocation of requirements to tasks is usually organized to suit the development methodology, whether Agile, Waterfall or otherwise. A series of work breakdown structures [WBS] (or perhaps one large one) is used to manage the tasks required to meet the commitment. In my book, it's preferable to have the system/design level requirements tracked as the same object that project management is going to use in its WBS. There is a whole set of project management information tracked against these requirements that is not tracked against the customer/product requirements. Either way, this works as long as the lines of authority and the required information are respected.

As a CM/ALM provider, we want to be able to manage changes to the requirements at all levels. We also want to be able to track and support full traceability, not only to the test cases, but to the very test results themselves.

Managing Requirements

To manage requirements we need to look at the process in the small and in the large.

In the small, requirements will change. Because of this, we would like to have revision control exercised over each requirement. In that way we can see how each requirement has changed. However, just like for software, a series of requirements will change together based on some input. So we also want to be able to track changes to requirements in change packages/updates that package multiple requirement modifications into a single change. We may want traceability on such a change back to the particular input that caused the change. The CM/ALM provider must provide both of these functions, with as much ease-of-use as possible.
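To make the two functions concrete, here is a minimal sketch of per-requirement revision history combined with change packages. The class names, fields and sample requirement texts are assumptions made for illustration; a real tool would also record authors, timestamps and approval state.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    """A requirement whose text is kept as a list of revisions, oldest first."""
    req_id: str
    revisions: list = field(default_factory=list)  # (package_id, text) pairs

    @property
    def current(self):
        """Latest revision, or None if the requirement has no revisions yet."""
        return self.revisions[-1] if self.revisions else None


@dataclass
class ChangePackage:
    """Groups several requirement modifications made for one reason."""
    package_id: str
    caused_by: str                                # the input that triggered the change
    changes: dict = field(default_factory=dict)   # req_id -> new text

    def apply(self, requirements):
        """Add one new revision to every requirement named in the package."""
        for req_id, new_text in self.changes.items():
            req = requirements.setdefault(req_id, Requirement(req_id))
            req.revisions.append((self.package_id, new_text))


requirements = {}
ChangePackage("CP-1", "initial customer spec", {
    "R1": "Device shall sync contacts.",
    "R2": "Device shall run 8 hours on battery.",
}).apply(requirements)
ChangePackage("CP-2", "customer feedback", {
    "R2": "Device shall run 12 hours on battery.",
}).apply(requirements)

# Show how R2 changed and which package made each change.
for package_id, text in requirements["R2"].revisions:
    print(package_id, text)
```

Because each revision carries the identifier of the package that created it, and each package records what caused it, a change can be traced from the requirement text back to the originating input.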

In the large, we have to understand that change will continually happen. However, we don't want to be jerking the development team around every time a requirement changes. So instead, we deal with releases of a requirements tree. Perhaps this is done once per significant product release; or maybe it's done for each iteration or every other iteration of an agile process. A good rule of thumb is to look at product releases in general. There are new features that you'll target for the next release, and there are some things that really have to be retrofitted into the current release.

A development team can deal with occasional changes to their current project schedule, but don't try to fit all of release 3 into release 2. Create an initial requirements tree for release 2 and baseline it. Review it with the development team so that they can size it and give you a realistic estimate on the effort required. Go back and forth a couple of times until you reach a contract between product management and the development team. Baseline it again and deliver the release 2 spec to development. Everything else goes into release 3. If it truly cannot wait, you then need to renegotiate the contract, understanding that either some functionality will be traded off, or deadlines modified. Don't expect that more resources can be thrown at the project, as it won't work. Your process should allow only one or two of these must-change negotiations in a release, and they need to be kept small. If not, you will need to completely renegotiate your release 2 content and contract, after agreeing to first absorb costs to date. Remember, if you reopen the can of worms, you may never get it closed again and the costs are already incurred and will continue to be incurred until such time as you reach a new agreement or call off the release.

Now if you happen to have an integrated tool suite that can tell you easily where you are in the project, what requirements have already been addressed or partially addressed, etc., it may give you some leverage in telling the customer that they should just wait for the next release for their change in requirements. This is especially the case if you have a rather short release cycle. An iterative agile process may proceed differently so as to maintain flexibility to the customer's feedback as they develop. However, this is really just dealing with smaller requirement trees in each iteration.

I would caution against dealing with one requirements tree per iteration however, as much advanced thinking is accomplished by taking a look at a larger set of requirements and after letting them soak into the brain(s) sufficiently, coming up with an architecture that will support the larger set of requirements. If the soak time is insufficient, or the large set is unknown, it's difficult to establish a good architecture and you may just end up with a patchwork of design.

Whatever the case, if both customer and product team can easily navigate the requirements and progress, both relationships and risk management will benefit. Make sure that you have tools and processes in place to adequately support requirements management. It is the most critical part of your product development, culminating in the marching orders.

Traceability to Test Cases and to Test Results

Test cases are used to verify the product deliverables against the requirements. When the requirements are customer requirements or product requirements, the test cases are referred to as black box test cases, that is, test cases that test the requirements without regard to how the product was designed. Black box test cases can, and should, be produced directly from the product specification as indicated by the product requirements.

When the requirements are system/design requirements, the test cases are referred to as white box test cases, because they are testing the design by looking inside the product. Typically white box test cases will include testing of all internal APIs, message sequences, etc.

A CM/ALM tool must be able to track test cases back to their requirements. Ideally, you should be able to click anywhere on the requirements tree and ask which test cases are required to verify that part of the tree. Even better, you should be able to identify which requirements are missing test cases. This doesn't seem like too onerous a capability for a CM/ALM tool, until you realize that the requirements tree itself is under continual revision/change control, as are the set of test cases. So these queries need to be context dependent.

Going one step further, if the CM/ALM tool is to help with the functional configuration audit, the results of running the test cases against a particular set of deliverables (i.e., a build) need to be tracked as well. Ideally the tool should allow you to identify which requirements failed verification, based on the set of failed test cases from the test case run. It should also be able to distinguish between test cases that have passed and those that have not been run.
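As a rough sketch of that roll-up, the code below derives a verification status for each requirement from the test results of one build. The three-state `Status` enum, the sample links and results, and the rule that a requirement with any unrun (or no) test case is reported as incomplete are assumptions for illustration only.

```python
from enum import Enum


class Status(Enum):
    PASSED = "passed"          # every linked test case ran and passed
    FAILED = "failed"          # at least one linked test case failed
    INCOMPLETE = "incomplete"  # some linked test case not run, or none linked


# Hypothetical trace links: test case id -> requirement ids it verifies.
verifies = {
    "TC-1": ["R1"],
    "TC-2": ["R2"],
    "TC-3": ["R2"],
    "TC-4": ["R3"],
}

# Hypothetical results for one build; TC-4 was not run, so it is absent.
build_results = {"TC-1": "pass", "TC-2": "fail", "TC-3": "pass"}


def requirement_status(req_id, verifies, results):
    """Roll the outcomes of the linked test cases up to one requirement."""
    linked = [tc for tc, reqs in verifies.items() if req_id in reqs]
    outcomes = [results.get(tc) for tc in linked]  # None means not run
    if any(outcome == "fail" for outcome in outcomes):
        return Status.FAILED
    if linked and all(outcome == "pass" for outcome in outcomes):
        return Status.PASSED
    return Status.INCOMPLETE


for req_id in ["R1", "R2", "R3"]:
    print(req_id, requirement_status(req_id, verifies, build_results).value)
```

Keeping "not run" separate from "passed" matters for the audit: a requirement whose test cases were skipped has not been verified, even though nothing failed.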

More advanced tools will allow you to ask questions such as: Which test cases have failed in some builds, but subsequently passed? What is the history of success/failure of a particular test case and/or requirement across the history of builds?
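Both questions reduce to simple queries once results are stored per build in chronological order. The sketch below assumes a hypothetical `history` list of builds and uses made-up build and test case identifiers.

```python
# Hypothetical results, one entry per build, oldest build first.
history = [
    ("build-101", {"TC-1": "pass", "TC-2": "fail"}),
    ("build-102", {"TC-1": "pass", "TC-2": "fail", "TC-3": "fail"}),
    ("build-103", {"TC-1": "pass", "TC-2": "pass", "TC-3": "fail"}),
]


def outcome_history(test_case):
    """Return (build, outcome) pairs for every build that ran the test case."""
    return [(build, results[test_case])
            for build, results in history if test_case in results]


def failed_then_passed():
    """Test cases that failed in some build and passed in a later one."""
    all_test_cases = sorted({tc for _, results in history for tc in results})
    fixed = []
    for tc in all_test_cases:
        outcomes = [outcome for _, outcome in outcome_history(tc)]
        if "fail" in outcomes and "pass" in outcomes[outcomes.index("fail"):]:
            fixed.append(tc)
    return fixed


print(outcome_history("TC-2"))
print(failed_then_passed())
```

The same history can be rolled up to requirements by following the test-case-to-requirement trace links.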

With change control integrated with requirements management, it should be relatively straightforward to put together incremental test suites that run tests using only those test cases that correspond to new or changed requirements. This is a useful capability for initially assessing new functionality introduced into a nightly or weekly build.
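A minimal sketch of such an incremental suite follows. It assumes the change packages applied since the last baseline can be reduced to a set of new or changed requirement identifiers; the identifiers and the `incremental_suite` helper are hypothetical.

```python
# Hypothetical trace links: test case id -> requirement ids it verifies.
verifies = {
    "TC-1": ["R1"],
    "TC-2": ["R2", "R3"],
    "TC-3": ["R4"],
    "TC-4": ["R5"],
}

# Hypothetical set of requirements that are new or changed since the
# last baseline, as gathered from the applied change packages.
changed_requirements = {"R2", "R5"}


def incremental_suite(changed, verifies):
    """Select only the test cases linked to a new or changed requirement."""
    return sorted(tc for tc, reqs in verifies.items() if changed & set(reqs))


suite = incremental_suite(changed_requirements, verifies)
print(suite)
```

A suite selected this way is a quick first assessment of new functionality and does not replace the full regression run.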

The ability to manage test cases and test results effectively, and to tie them to requirements, will result in requirements of higher quality. The feedback loop will help to ensure testability and will uncover holes and ambiguities in the requirements.