7 Agile Testing Trends to Watch for in 2020

With 2020 upon us, software development firms seeking to increase their agility are focusing more and more on aligning their testing approach with agile principles. Let’s look at seven of the key agile testing trends that will impact organizations most this year.

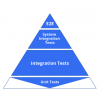

The Eroding Agile Test Pyramid

The test pyramid is a great model for designing your test portfolio. However, the bottom tends to fall out when you shift from progression testing to regression testing. The tests start failing, eroding the number of working unit tests at the base of your pyramid. If you don't have the development resources required for continuous unit test maintenance, there are still things you can do.

Requirements Mapping Using Business Function Test Suites

On one team, testers were overcommitted, avoidable defects were surfacing, and documentation was hard to find. Worse, trust and morale were low. Upgrading tools was out of the question, so the testers decided to take matters into their own hands and create incremental change themselves. Here's how the team added a new type of traceability to its requirements and test cases.

4 Strategies for a Structured QA Process

Being a software tester is no longer just about finding bugs. It is about continuous improvement, defining a clear test strategy, and going that extra mile to improve quality. Following a consistent, structured approach to QA will help you acquire more knowledge about the product you are testing, ask questions you otherwise may not have thought of, and become a true owner of quality.

QA Is More Than Being a Tester

QA testers often take on a broader role than just testing software code. When the team needs help, QA should lend a hand with business analysis, customer communication, user experience, and user advocacy.

The Unspoken Truth about IoT Test Automation

The internet of things (IoT) continues to proliferate as connected smart devices become critical for individuals and businesses. Even with test automation, performing comprehensive testing can be quite a challenge.

Test-Driven Service Virtualization

Because enterprise applications are highly interconnected, development in stages puts a strain on the implementation and execution of automated testing. Service virtualization can be introduced to validate work in progress while reducing the dependencies on components and third-party technologies still under development.

Adopt an Innovative Quality Approach to Testing

How much testing is really enough? Given resources, budget, and time, the goal of comprehensive testing seems impossible to achieve. It’s time to rethink your test strategy and start innovating.

Testing as a Craft: A Conversation with Greg Paskal

Podcast

Greg Paskal, evangelist in testing sciences and lead author for RealWorldTestAutomation.com, chats with TechWell community manager Owen Gotimer about testing as a craft, choosing the right test automation tools, and current testing trends around the world.

Embracing Tools and Technology in QA: An Interview with Melissa Tondi

Video

In this interview, Melissa Tondi, senior QA strategist at Rainforest, discusses the foundation you need to introduce new tools and technologies in a positive way. She explains why the team leader has to understand what motivates each individual and how to get them excited about their job. Melissa says team members also have to realize that if they are in any way involved in testing software, they are technologists, so they have to embrace the tools and technology that will continuously improve and streamline repetitive tasks.

Strategic Leadership in Agile: An Interview with Bob Galen

Video

In this interview, Bob Galen, principal agile coach at Vaco Agile, talks about the importance of getting rid of silos by breaking down the barriers of “them and us” and becoming “we.” He also discusses the need for agile managers to steer away from a tactical management view toward a more strategic leadership view. That means leading their teams by setting expectations and guidelines and being available to help if needed, but ultimately just trusting their teams to get the job done.

What Testers Can Learn from Airline Safety Improvements: An Interview with Peter Varhol and Gerie Owens

Video

Technologist and evangelist Peter Varhol and Gerie Owens, a test architect and certified ScrumMaster, discuss their STARWEST presentation, “What Aircrews Can Teach Testers about Testing.” They talk about how testers can apply airline safety practices to their teams’ delivery of high-quality applications through complementary expertise, collaboration, and decision-making. They also explain how blind deference to authority and automation can be detrimental to a testing team, and how to use everyone’s skills to achieve success.

Providing Value as a Leader: More Than Just Being the Boss

Slideshow

Being a test manager is more than just being the boss. Sure, there is direction to set, issues to address, hiring, performance reviews, and status updates.

Jeff Abshoff

3 Steps to QA Success from a VP of Quality Assurance

Slideshow

Are you a leader with a quality problem? Every organization struggles with quality at some point in its product lifecycle. Knowing what to measure and how to build a culture of quality with specific and actionable methods is key.

Karen Holliday

What's That Smell? Tidying Up Our Test Code

Slideshow

Those experienced in writing test automation often remind us that code is code. The point is that test code should be written with the same care and rigor as production code.

Angie Jones

Testing in Production

Slideshow

How do you know your feature is working perfectly in production? And if something breaks in production, how will you know?

Talia Nassi

AgileConnection is a TechWell community.

Through conferences, training, consulting, and online resources, TechWell helps you develop and deliver great software every day.